The Faculty of Computer and Information Science at the University of Ljubljana and MathWorks have signed a cooperation agreement. Under the agreement, MathWorks will integrate LOCA (Low-shot Object Counting network with iterative prototype Adaptation) into its MATLAB software distribution. LOCA was developed by members of the Visual Cognitive Systems Laboratory (Nikola Đukić, Assoc. Prof. Dr. Alan Lukežič and Vitjan Zavrtanik) under the leadership of Prof. Matej Kristan.

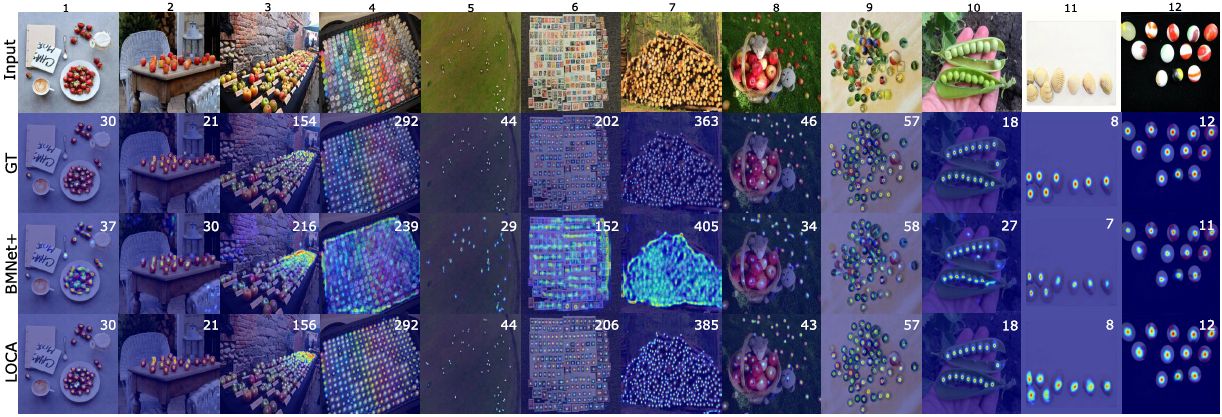

LOCA is an advanced machine learning method for counting objects when few or no training examples are available. The user labels only a few examples of a chosen object category in an image, for instance three. LOCA then adapts to that category and counts all the other objects of the same category in the image. Beyond its research interest, LOCA is useful in many practical applications where the large datasets needed to train classical detection algorithms are not available, such as biological research. On standard datasets, LOCA reduces error by up to 30% compared with related methods, and it has been ranked as the best method on the Papers with Code platform for more than a year.

LOCA was presented at the prestigious ICCV 2023 computer vision conference and is freely available on GitHub. Development is continuing, and the next generation of the method, which promises even better results, will be presented at the CVPR 2024 conference in Seattle.

In the highly competitive field of computer vision, the success of deep-model research such as LOCA depends heavily on access to specialised computing infrastructure. The Vega supercomputer and the Arnes supercomputing cluster therefore played a key role in developing LOCA: they gave the researchers free access to their computing resources and made faster development possible.

You can find out more about the object counting methods being developed in the Visual Cognitive Systems Laboratory on the laboratory's website: https://www.vicos.si/research/object-counting/